by Luis Rodrigues

What I built this week + one AI take + four links worth your time.

The Take

A few years ago, I watched developers report bugs via Skype chat instead of using a tracker, because managers were using the tracker to measure performance. Fewer bugs recorded, better reviews.

Now it's happening with AI budgets.

Uber burned through its entire 2026 AI budget in four months. It has engineers spending between $500 and $2,000 per month, and 70% of committed code is AI-generated. The Hacker News thread was full of engineers describing token leaderboards at their companies. One said they have a minimum monthly spend. Another wrote about a colleague who generated 300,000 lines of code in a month, which he clearly didn't read.

A friend at a large European digital bank told me the same thing this week: spending a fortune on Claude Code, not shipping faster.

This is Goodhart's Law with billing attached: when a measure becomes a target, it stops being a good measure. Token spend is just the new version.

The real waste isn't even gaming the metrics. It's keeping huge context windows without compacting. People say Claude is expensive while having chats with 200k tokens.

That means every new question re-sends the whole conversation, so you get charged for all those 200k tokens as input again.

The difference between $200/month and $2,000/month is often the same work with worse context hygiene.
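
To make the arithmetic concrete, here is a rough sketch of how billed input grows when every turn re-sends the full history. The price per million tokens, the tokens added per turn, the compaction threshold, and the summary size are all illustrative assumptions, not real pricing, and the model ignores output tokens and any prompt-caching discount.

```python
# Rough model: each new question re-sends the whole conversation as input.
# Every number here is an illustrative assumption, not real pricing.

PRICE_PER_MILLION_INPUT = 3.00  # assumed USD per 1M input tokens


def input_tokens_billed(turns, tokens_per_turn, compact_at=None, summary_tokens=0):
    """Total input tokens billed over a conversation.

    Each turn adds `tokens_per_turn` to the history and is billed for the
    full history so far. If `compact_at` is set, the history is replaced by
    a summary of `summary_tokens` once it reaches that size.
    """
    history = 0
    billed = 0
    for _ in range(turns):
        history += tokens_per_turn
        billed += history
        if compact_at is not None and history >= compact_at:
            history = summary_tokens
    return billed


def cost_usd(tokens):
    """Convert billed input tokens to dollars at the assumed price."""
    return tokens / 1_000_000 * PRICE_PER_MILLION_INPUT


# Same 100 questions, with and without compacting at 40k tokens.
no_compact = input_tokens_billed(turns=100, tokens_per_turn=2_000)
compacted = input_tokens_billed(
    turns=100, tokens_per_turn=2_000, compact_at=40_000, summary_tokens=4_000
)

print(f"never compacted: {no_compact:,} tokens, ${cost_usd(no_compact):.2f}")
print(f"compacted:       {compacted:,} tokens, ${cost_usd(compacted):.2f}")
```

Without compaction, billed input grows roughly with the square of the number of turns, because each turn pays for everything before it. Compacting resets that growth, which is where the gap between the two bills comes from.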

If you're measuring AI adoption by tokens spent, you're not measuring productivity. You're measuring how fast your team can burn cash. The metric that matters is what shipped into products and got used by real customers. Not the number of AI-generated lines.

If you want some free productivity gains today, check how many engineers on your team have never compacted their context.

Not managing a team? The same principle applies. If you keep burning through your weekly usage limit, you're probably paying for context you should have cleared many prompts ago.

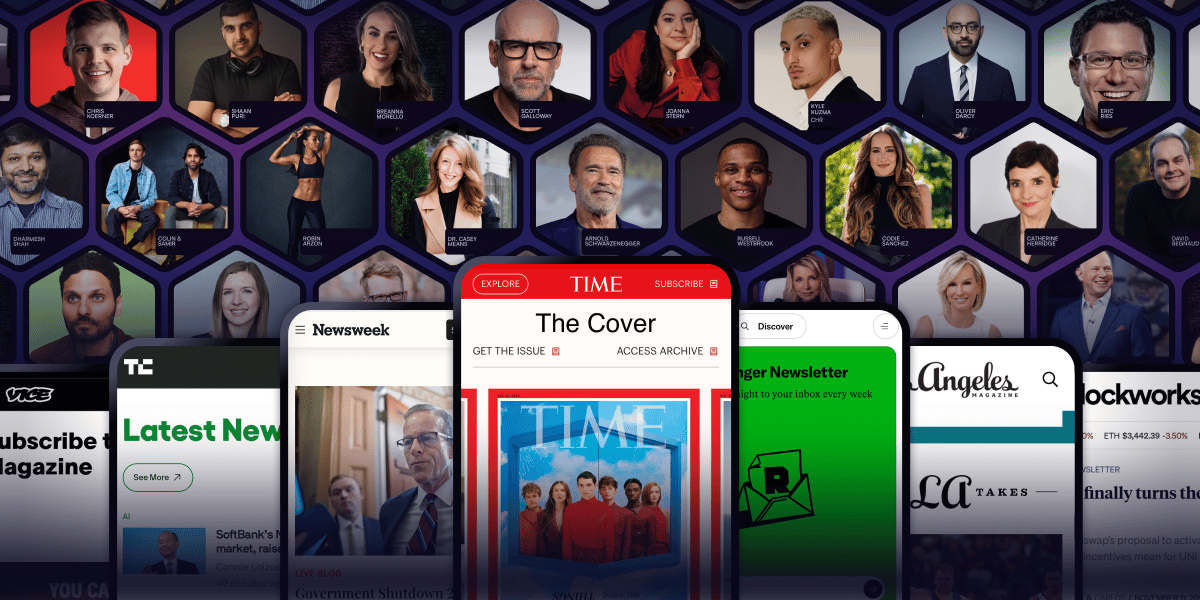

Nobody's asking why Arnold Schwarzenegger has a newsletter.

They're too busy reading it.

Arnold Schwarzenegger. Codie Sanchez. Scott Galloway. Colin & Samir. Shaan Puri. Jay Shetty. They all figured out the same thing: owned audiences compound, rented ones disappear. beehiiv is where they built theirs.

30% off your first 3 months with code PLATFORM30. Start building today.

The Build Log

Last month, a founder slipped into my DMs asking which cloud provider we were using for thoughtled.ai. I said, "Hetzner," and he laughed, "How can you host production on something like that?"

thoughtled.ai runs on Django, React, and Vite. We have two servers on Hetzner for high availability, and everything is managed with Coolify.

No Kubernetes. No Vercel. No AWS. The whole infrastructure with high availability for $14/month, less than a lunch in Dubai.

The typical founder playbook is to use AWS/Vercel/whatever so you can "scale". Some end up spending $200/month on infrastructure for 10 users.

People drink the Kool-Aid of Cloud and get stuck with it, thinking that's the only solution.

Cost isn't even the main reason I picked a boring stack. The main reason is AI. Django has been around since 2005. React since 2013. There are millions of open source repositories, thousands of threads on Stack Overflow, and entire books in today's AI training sets.

This is the same reason the UXPilot → Claude Code pipeline I wrote about last week works in one pass: Claude has seen millions of React components.

Now, try to do the same with a recent, fancy framework. The model will hallucinate APIs and mix versions. You spend more time correcting the AI than shipping features.
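
One cheap way to catch hallucinated APIs before they cost you an afternoon is to check that the names the model used actually exist in what you have installed. This is a minimal sketch, not a full linter: the helper name `missing_names` is hypothetical, it only checks that a dotted name resolves (not that the arguments are right), and it imports the modules it checks, so only point it at libraries you trust.

```python
import importlib


def missing_names(dotted_names):
    """Return the dotted names that do not resolve in this environment.

    `missing_names` is a hypothetical helper for illustration. For each
    name it imports the longest importable module prefix, then walks the
    remaining parts as attributes.
    """
    missing = []
    for dotted in dotted_names:
        parts = dotted.split(".")
        for i in range(len(parts), 0, -1):
            try:
                obj = importlib.import_module(".".join(parts[:i]))
            except ImportError:
                continue  # try a shorter module prefix
            rest = parts[i:]
            break
        else:
            # No prefix could be imported at all.
            missing.append(dotted)
            continue
        try:
            for attr in rest:
                obj = getattr(obj, attr)
        except AttributeError:
            missing.append(dotted)
    return missing


# Names as they might appear in AI-generated code; two of them are made up.
suggested = ["json.dumps", "json.to_string", "os.path.join", "not_a_real_pkg.thing"]
print(missing_names(suggested))
```

If a check like this keeps flagging names for a given framework, that is a hint the model has not seen enough of it.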

Boring stacks aren't boring. They're the ones with the deepest training data.

The age of AI is one where having something "well documented" is a competitive advantage when you need to ship quickly.

Coolify handles deployment: push to GitHub, it builds and deploys. No YAML, no DevOps hire.

The trade-off is real. We can't use edge functions, auto-scaling, or serverless. We don't need any of it. Our clients need features and fast loading, and we get the latter from edge caching with Cloudflare.

If you're a solo founder or on a small team and you're spending more on infrastructure than one lunch a month, ask yourself why you're doing it.

A few servers, a boring stack, and an AI that knows your framework inside out will get you further than any enterprise cloud setup at this stage.

Not building yet? Next time you evaluate a tool, check the framework. If the AI can't write code for it without hallucinating, that's your answer.

On My Radar

$2B ARR, 70% of the Fortune 1000, and they still couldn't outrun negative margins from their own supplier.

OpenAI, Anthropic, and now Google are moving to consumption pricing: the shift matters more than the model releases.

Two labs now ship cyber-specific models behind identity gates. If you do pen testing, apply before the queue gets long.

If your agent has prod write access, you don't have an AI problem; you have a permissions problem.

What are you building?

Reader Build: George from Dubai is a recruiter now building with AI. His project, HAILO, is a platform that handles the full hiring pipeline from intake to onboarding. The goal is to cut time-to-hire by reducing the manual triage where teams waste time checking spreadsheets and candidate lists, while surfacing candidates that keyword filters would miss. → hailotalent.com

Got something you built? Hit reply. The best ones get featured next week.

Know someone still overpaying for cloud who needs to learn about boring stacks? Forward them this.

New here? Someone forwarded this to you? → Subscribe to Build What Matters: https://luisrodrigues.ai/

P.S. Subscribers get $5K+ in software credits through our Secret partnership (AWS, Loom, Notion, Lovable, and more). Haven't claimed yours yet? → https://build-what-matters.joinsecret.com/